ChatGPT ads measured: 0.42% of responses — near the ceiling?

ChatGPT ads measured at 0.42% of responses across 8,243 prompts. Google AI Overview ads inside the AI summary at 0.24%. ChatGPT's surface may already be near its ceiling.
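A rate measured on a few thousand prompts carries sampling noise. The sketch below shows one way to estimate how far 0.42% could move on re-measurement, using a Wilson score interval. It rests on two assumptions. First, the rate corresponds to about 35 of 8,243 responses (0.42% × 8,243 ≈ 34.6). Second, each prompt is an independent trial, which repeated or closely related prompts may violate.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95% coverage)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    z2 = z * z
    denom = 1 + z2 / trials
    center = (p_hat + z2 / (2 * trials)) / denom
    margin = z * math.sqrt(p_hat * (1 - p_hat) / trials + z2 / (4 * trials * trials)) / denom
    return center - margin, center + margin


# Assumption: 0.42% of 8,243 prompts is roughly 35 responses that showed an ad.
ADS_SEEN = 35
PROMPTS = 8243

low, high = wilson_interval(ADS_SEEN, PROMPTS)
print(f"observed {ADS_SEEN / PROMPTS:.2%}, 95% interval {low:.2%} to {high:.2%}")
```

With these inputs the 95% interval comes out at roughly 0.3% to 0.6%. A re-run that lands anywhere in that band would be consistent with the same underlying rate.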

Get the real ChatGPT UI responses: sources, shopping cards, brand entities, query fan-out, and citations. All the data the OpenAI API never returns, in markdown, any country, any scale.

4.7 on G2. No credit card required.

```bash
curl -X POST https://api.cloro.dev/v1/monitor/chatgpt \
  -H "Authorization: Bearer sk_live_your_api_key_here" \
  -H "Content-Type: application/json" \
  -d '{
    "prompt": "What do you know about Tesla'\''s latest updates?",
    "country": "US",
    "include": {
      "markdown": true
    }
  }'
```

```json
{
  "success": true,
  "result": {
    "text": "...",
    "sources": [],
    "html": "...",
    "markdown": "...",
    "searchQueries": [],
    "shoppingCards": [],
    "entities": []
  }
}
```

cloro runs more ChatGPT requests than any other provider, and the same key works across many other SEO and GEO engines.

We run more than 100 million ChatGPT requests every month, the largest dataset of real ChatGPT UI responses on the market. Here is what we have learned that the OpenAI API will not tell you.

ChatGPT's anti-automation defenses harden weekly. Rotating proxies and headless browsers buy you about a day before throttling kicks in. cloro handles the cat-and-mouse so your monitoring keeps running when DIY pipelines break.

ChatGPT with Web Search re-ranks sources per session, country, and prompt phrasing. A single API call tells you nothing about coverage. cloro runs the same prompt across regions and tracks citation drift over time so you see the real distribution.
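Sources are re-ranked per session, country, and phrasing, so a useful summary is how often each domain appears across repeated runs of the same prompt. This is a minimal sketch. It assumes you have collected several `result` objects shaped like the example response on this page, where `sources` is a list of objects with a `url`. `citation_share` is a hypothetical helper, and the sample runs are invented.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(results: list[dict]) -> dict[str, float]:
    """Fraction of runs in which each domain is cited at least once."""
    if not results:
        return {}
    counts = Counter()
    for result in results:
        # A set, so a domain cited twice in one run still counts once for that run.
        # Hostnames are compared as-is ("www.example.com" != "example.com").
        domains = {
            urlparse(source["url"]).netloc.lower()
            for source in result.get("sources", [])
            if source.get("url")
        }
        counts.update(domains)
    return {domain: n / len(results) for domain, n in counts.most_common()}


# Invented sample: three runs of the same prompt.
runs = [
    {"sources": [{"url": "https://tesla.com/updates/fsd"}, {"url": "https://news.example/a"}]},
    {"sources": [{"url": "https://tesla.com/modely"}]},
    {"sources": [{"url": "https://news.example/b"}, {"url": "https://tesla.com/x"}]},
]
print(citation_share(runs))
```

Tracking these shares per day or per country, rather than reading a single response, is what makes drift visible.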

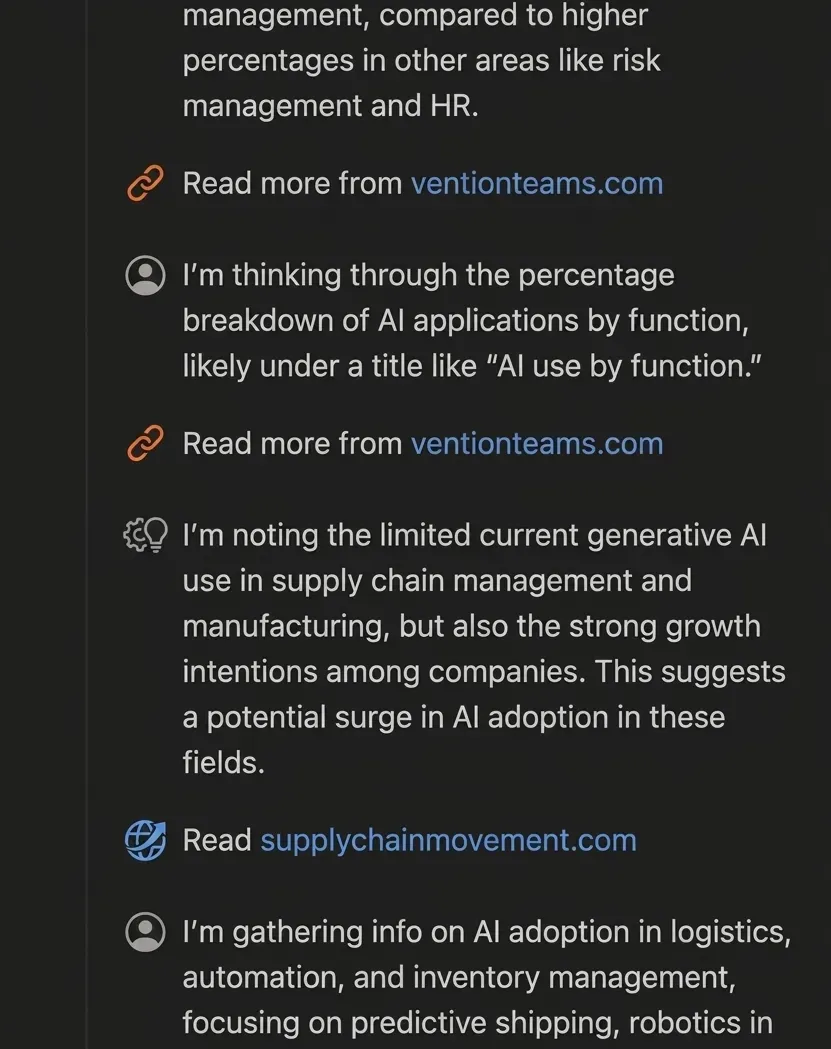

The OpenAI API returns the model output. ChatGPT-the-product wraps it in web search, source citations, shopping cards, and entity panels: the exact surfaces that determine whether your brand gets mentioned. Surfer's analysis found only ~20% overlap between API responses and what users actually see in the ChatGPT UI.

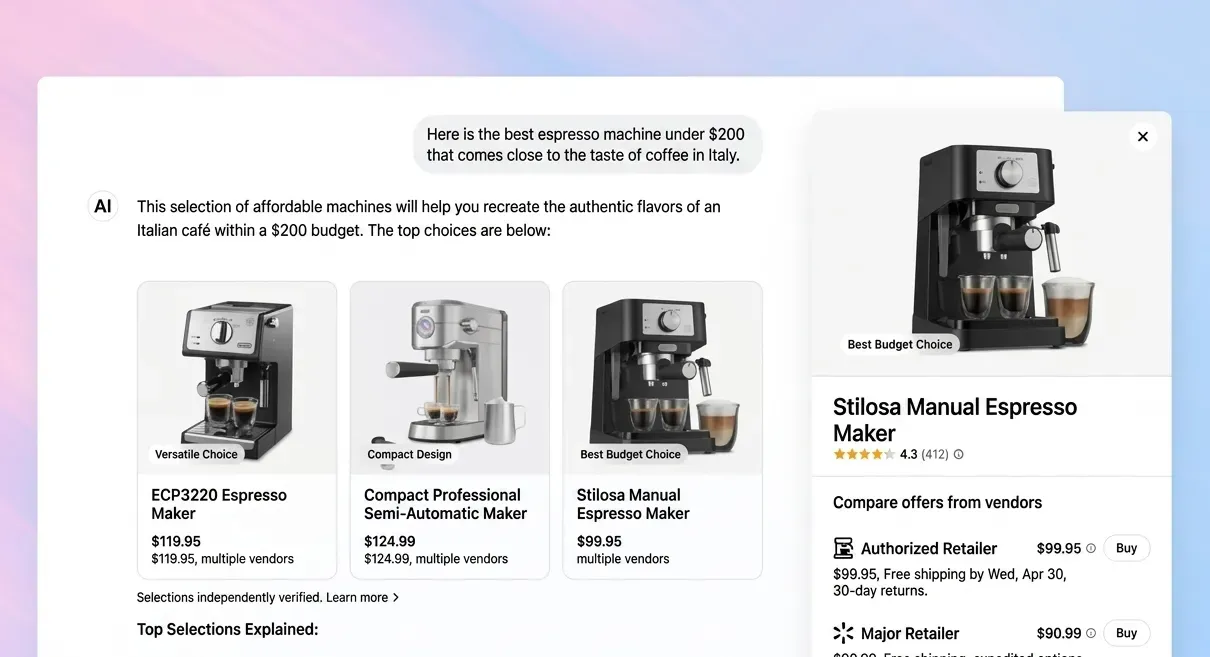

ChatGPT renders far more inline UI than text and citations: shopping cards, inline products, maps, entities, ads, and query fan-outs. None of this lives in any OpenAI endpoint. cloro returns each as structured JSON.
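Once these surfaces arrive as JSON, you can check whether a brand appears on any of them by walking the result. This is a minimal sketch that assumes the field layout of the example response on this page: `text`, `sources[].url`/`label`, `shoppingCards[].product.brand`, and `entities[].name`. `brand_surfaces` is a hypothetical helper. Its plain substring match on text and sources will also catch partial matches, so tighten it for short or ambiguous brand names.

```python
def brand_surfaces(result: dict, brand: str) -> list[str]:
    """Names of the result surfaces that mention `brand` (case-insensitive)."""
    needle = brand.lower()
    hits = []
    # Answer text: substring match.
    if needle in (result.get("text") or "").lower():
        hits.append("text")
    # Sources: substring match on URL and label.
    if any(needle in f"{s.get('url', '')} {s.get('label', '')}".lower()
           for s in result.get("sources", [])):
        hits.append("sources")
    # Shopping cards and entities: exact (case-insensitive) name match.
    if any(needle == (card.get("product", {}).get("brand") or "").lower()
           for card in result.get("shoppingCards", [])):
        hits.append("shoppingCards")
    if any(needle == (entity.get("name") or "").lower()
           for entity in result.get("entities", [])):
        hits.append("entities")
    return hits


example = {
    "text": "Tesla's recent updates...",
    "sources": [{"url": "https://tesla.com/updates/fsd", "label": "Tesla FSD Updates"}],
    "shoppingCards": [{"product": {"name": "Model Y", "brand": "Tesla"}}],
    "entities": [{"name": "Tesla", "type": "company"}],
}
print(brand_surfaces(example, "Tesla"))
print(brand_surfaces(example, "Rivian"))
```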

Parse markdown, sources, shopping cards, entities, and query fan-out from one endpoint.

```python
import requests

response = requests.post(
    "https://api.cloro.dev/v1/monitor/chatgpt",
    headers={
        "Authorization": "Bearer sk_live_your_api_key_here",
        "Content-Type": "application/json",
    },
    json={
        "prompt": "What do you know about Tesla's latest updates?",
        "country": "US",
        "include": {
            "markdown": True,  # also return the answer as markdown
        },
    },
)
response.raise_for_status()  # fail loudly on HTTP errors
print(response.json())
```

```json
{
  "success": true,
  "result": {
    "text": "Tesla's recent updates...",
    "model": "gpt-5-3",
    "sources": [
      {
        "position": 1,
        "url": "https://tesla.com/updates/fsd",
        "label": "Tesla FSD Updates",
        "description": "Latest Full Self-Driving..."
      }
    ],
    "html": "<div class=\"markdown\"><h3>Tesla Recent Updates</h3><p>Tesla's recent updates...</p></div>",
    "markdown": "### Tesla Recent Updates\n\nTesla's recent updates include significant improvements...",
    "searchQueries": [
      "Tesla updates 2024"
    ],
    "shoppingCards": [
      {
        "position": 1,
        "product": {
          "name": "Model Y",
          "brand": "Tesla",
          "price": "$43,990",
          "currency": "USD",
          "rating": 4.5,
          "reviewCount": 2847,
          "imageUrl": "https://example.com/tesla-model-y.jpg",
          "productUrl": "https://tesla.com/modely",
          "description": "All-electric compact SUV..."
        }
      }
    ],
    "entities": [
      {
        "name": "Tesla",
        "type": "company"
      }
    ]
  }
}
```

Pick a plan that fits your volume. Price per credit drops as you scale.

Credit cost per request varies by provider. The rates below apply to async/batch requests; each sync request costs an extra 2 credits.

Google News uses the same pricing as Google Search.
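Because the sync surcharge is a flat amount per request, budgeting is simple arithmetic. This is a worked sketch with a hypothetical base rate of 5 credits. The real per-provider rates are in the pricing table and are not reproduced here, and both helpers are hypothetical.

```python
SYNC_SURCHARGE = 2  # extra credits per sync request, per the pricing note


def request_credits(base_rate: int, sync: bool) -> int:
    """Credits for one request: the async/batch base rate, plus the surcharge when sync."""
    return base_rate + (SYNC_SURCHARGE if sync else 0)


def batch_credits(base_rate: int, n_requests: int, sync: bool) -> int:
    """Credits for n identical requests."""
    return n_requests * request_credits(base_rate, sync)


# Hypothetical base rate; look up the real one for your provider.
BASE_RATE = 5
print(batch_credits(BASE_RATE, 1_000, sync=False))  # async/batch
print(batch_credits(BASE_RATE, 1_000, sync=True))   # sync
```

Whatever the base rate, 1,000 sync requests cost 2,000 credits more than the same 1,000 sent async.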

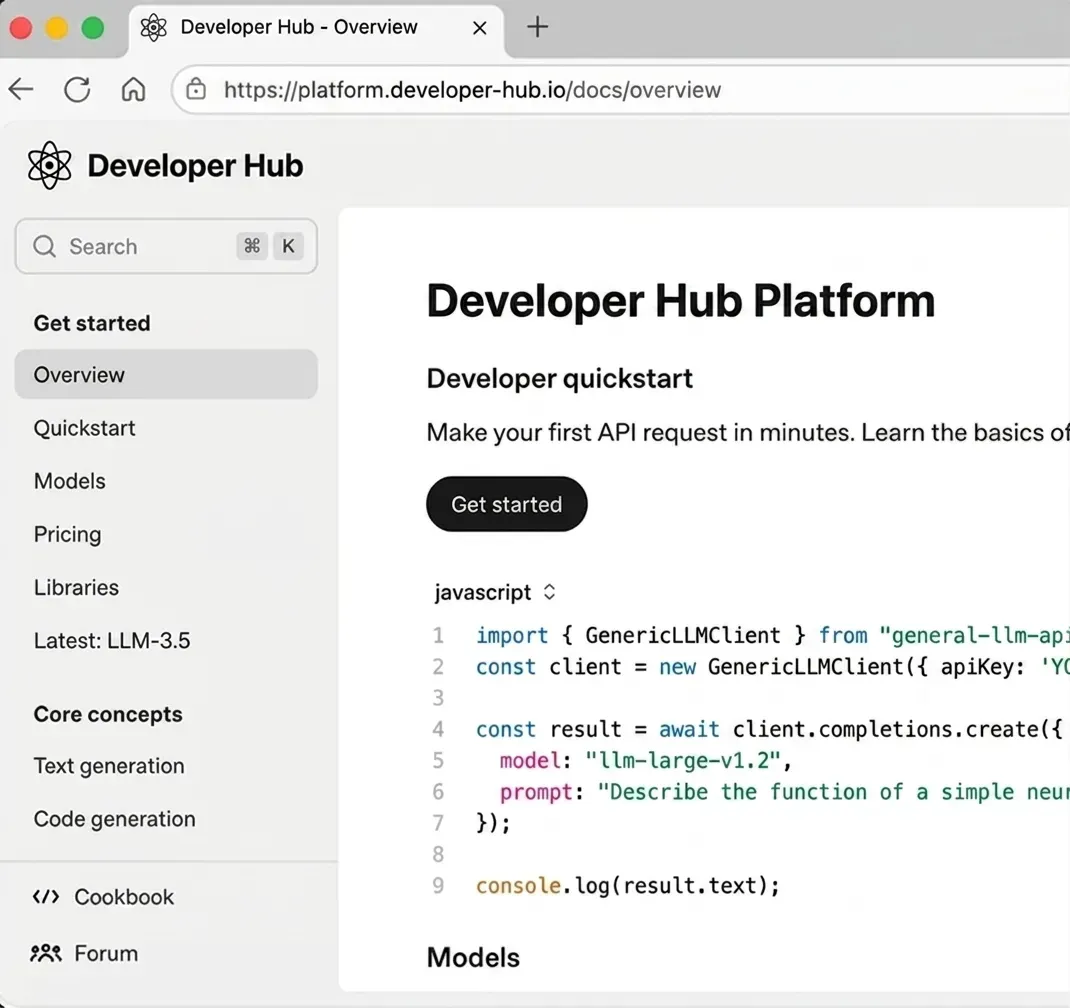

The OpenAI API and ChatGPT-the-product are different surfaces. The API returns model output without the web search results, source citations, shopping cards, or entity panels that determine whether your brand actually shows up to users. cloro extracts what ChatGPT users see, not what the model would say in isolation.

Each request runs against ChatGPT live. Typical response time is 30–45 seconds. There is no caching layer between you and the model, so the citations you see are the citations a real user would see at that moment.
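Every call runs live, so the client timeout has to outlast the 30–45 second window. `requests` never times out unless you pass a `timeout`. This is a minimal sketch. The 10 s connect / 90 s read split is an assumption, not a documented recommendation, and `monitor_sync` is a hypothetical helper.

```python
import requests

API_URL = "https://api.cloro.dev/v1/monitor/chatgpt"
# (connect seconds, read seconds); assumed values that leave headroom over 30-45 s.
TIMEOUT = (10, 90)


def monitor_sync(session: requests.Session, api_key: str, prompt: str, country: str = "US") -> dict:
    """Run one sync request; `json=` also sets the Content-Type header."""
    response = session.post(
        API_URL,
        headers={"Authorization": f"Bearer {api_key}"},
        json={"prompt": prompt, "country": country, "include": {"markdown": True}},
        timeout=TIMEOUT,
    )
    response.raise_for_status()
    return response.json()
```

Reusing one `requests.Session` across calls keeps connections pooled. A read timeout raises `requests.exceptions.ReadTimeout`, which you can catch and retry.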

To monitor a specific market, pass `country` (any ISO 3166 country code, such as `US`) and cloro routes the request through that locale. Citation distributions shift meaningfully between US, EU, and APAC for the same prompt, so country-level monitoring is the only way to see regional brand-visibility gaps.
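With one `result` per country for the same prompt, a set difference on cited domains shows where the regional gaps are. This is a minimal sketch that assumes `sources[].url` as in the example response. Both helpers are hypothetical and the sample data is invented. Since a single run is noisy, in practice you would pool several runs per country first.

```python
from urllib.parse import urlparse


def cited_domains(result: dict) -> set[str]:
    """Hostnames cited in one result's sources."""
    return {urlparse(s["url"]).netloc.lower() for s in result.get("sources", []) if s.get("url")}


def regional_gap(by_country: dict[str, dict]) -> dict[str, set[str]]:
    """For each country, the domains cited there but in no other country."""
    domains = {country: cited_domains(result) for country, result in by_country.items()}
    gap = {}
    for country, own in domains.items():
        others = set().union(*(d for c, d in domains.items() if c != country))
        gap[country] = own - others
    return gap


# Invented sample: the same prompt run with country="US" and country="DE".
results = {
    "US": {"sources": [{"url": "https://tesla.com/a"}, {"url": "https://us-news.example/b"}]},
    "DE": {"sources": [{"url": "https://tesla.com/de"}, {"url": "https://de-news.example/c"}]},
}
print(regional_gap(results))
```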

Beyond text and sources, cloro returns: `shoppingCards` (grouped products with offers), `inlineProducts` (individual product refs with render hints), `entities` (brands and products), `map` (business/place data with ratings and contact info), `ads` (advertiser branding cards), `citationPills` (inline citation metadata), plus the full `searchQueries` query fan-out (returned on every request across both current models). All are extracted from the rendered ChatGPT UI, and none is available through any OpenAI endpoint. Each is returned as structured JSON in `result`.
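Not every surface appears on every response, so it is safer to read these fields defensively. This minimal sketch counts items per surface. It assumes that an absent key means the surface was not rendered and that list-valued fields hold one item per element. The inner schemas of `map`, `ads`, and the others are not shown on this page, so the sketch only counts them. The same counts give an ad rate of the same kind as the 0.42% figure: the share of responses with a non-empty `ads` field.

```python
SURFACES = ("shoppingCards", "inlineProducts", "entities", "map", "ads", "citationPills", "searchQueries")


def surface_counts(result: dict) -> dict[str, int]:
    """Number of items per UI surface; 0 when the key is absent."""
    counts = {}
    for key in SURFACES:
        value = result.get(key)
        if value is None:
            counts[key] = 0
        elif isinstance(value, list):
            counts[key] = len(value)
        else:
            counts[key] = 1  # a single object (shape assumed), e.g. one map block
    return counts


def ad_rate(results: list[dict]) -> float:
    """Share of responses that rendered at least one ad."""
    if not results:
        return 0.0
    return sum(1 for r in results if surface_counts(r)["ads"] > 0) / len(results)


sample = [
    {"ads": [{"brand": "Example Co"}], "searchQueries": ["a", "b"], "map": {"name": "Shop"}},
    {"entities": []},
]
print(surface_counts(sample[0]), ad_rate(sample))
```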

For high volumes, use the async endpoint (`POST /v1/monitor/chatgpt/async`): submit the task and receive results via webhook. This avoids holding connections open and is the right pattern for thousands of prompts per day. Concurrency limits on the sync endpoint depend on your plan.
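The async flow has two halves: a quick submission and a later webhook delivery. This is a minimal sketch of both under loudly stated assumptions. The `webhook` request field name is hypothetical. The webhook body is assumed to use the same `{"success", "result"}` envelope as the sync response. Check the API reference for the real field names before relying on either.

```python
import json

import requests

ASYNC_URL = "https://api.cloro.dev/v1/monitor/chatgpt/async"


def build_async_payload(prompt: str, country: str, webhook_url: str) -> dict:
    # HYPOTHETICAL: "webhook" is an assumed field name, not a documented one.
    return {"prompt": prompt, "country": country, "include": {"markdown": True}, "webhook": webhook_url}


def submit_async(session: requests.Session, api_key: str, payload: dict) -> dict:
    """Submit one task; returns the acknowledgement, not the result."""
    response = session.post(ASYNC_URL, headers={"Authorization": f"Bearer {api_key}"},
                            json=payload, timeout=30)
    response.raise_for_status()
    return response.json()


def handle_webhook(body: bytes) -> dict:
    """Parse a webhook delivery, ASSUMING the sync response envelope."""
    event = json.loads(body)
    if not event.get("success"):
        raise ValueError("task failed")
    return event["result"]
```

Your web framework's handler would pass the raw request body to `handle_webhook`. Submission returns quickly, so thousands of prompts never hold thousands of connections open.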

ChatGPT often issues multiple internal search queries to answer a single user prompt. The `searchQueries` array exposes those, so when you ask 'best CRM for startups' you also see the underlying queries ChatGPT ran ('crm comparison 2026', 'startup crm pricing'), which are the keywords your content must rank for to be cited.
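Aggregating `searchQueries` across a set of prompts shows which underlying queries recur, and those are the candidates to prioritize. This is a minimal sketch. The lowercase/whitespace normalization is an assumption, `fanout_keywords` is a hypothetical helper, and the sample reuses the CRM queries above plus one invented query.

```python
from collections import Counter


def fanout_keywords(results: list[dict]) -> list[tuple[str, int]]:
    """How many prompts triggered each underlying search query, most frequent first."""
    counts = Counter()
    for result in results:
        # Normalize case and whitespace; a set so one prompt counts a query once.
        queries = {" ".join(q.lower().split()) for q in result.get("searchQueries", [])}
        counts.update(queries)
    return counts.most_common()


results = [
    {"searchQueries": ["crm comparison 2026", "startup crm pricing"]},
    {"searchQueries": ["CRM  comparison 2026", "best crm free tier"]},
]
print(fanout_keywords(results))
```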

Each `result` payload includes `model`, currently either `"gpt-5-3"` or `"gpt-5-3-mini"`, depending on which variant ChatGPT served for the prompt. Both models behave consistently for monitoring purposes: full source citations, `searchQueries` fan-out, shopping cards, entities, and the rest of the UI surface come back regardless of which one the prompt is routed to.

Direct scraping requires rotating residential proxies, headless browser farms, and a maintenance team to chase ChatGPT's anti-automation updates. Most teams spend $3–8k/month on infra alone, before counting engineer time. cloro starts at $100/month with 250k requests included and zero infra to run.
