LLM Visibility Tools: 12 Tested for AI Search

We tested 12 LLM visibility tracking tools on real brand-monitoring workflows across ChatGPT, Perplexity, Gemini, and Google AI Overview. What works, what doesn't.

Get the real Grok responses with rich source metadata, including all the data the xAI API never returns. Markdown out, any country, any scale.

4.7 on G2. No credit card required.

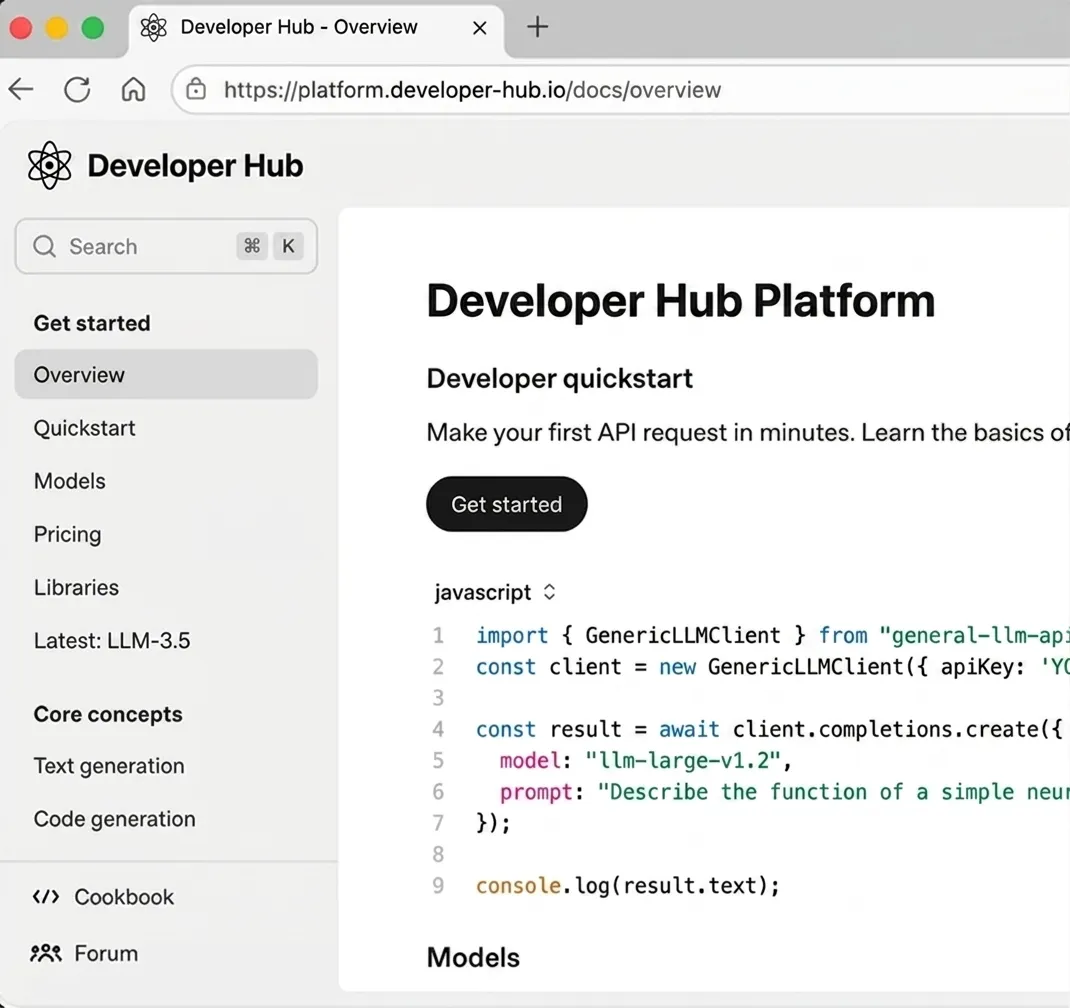

    curl -X POST https://api.cloro.dev/v1/monitor/grok \
      -H "Authorization: Bearer sk_live_your_api_key_here" \
      -H "Content-Type: application/json" \
      -d '{
        "prompt": "What are the latest developments in quantum computing 2026?",
        "country": "US",
        "include": {
          "markdown": true
        }
      }'

Response:

    {
      "success": true,
      "result": {
        "text": "...",
        "model": "grok-4-auto",
        "sources": [],
        "html": "...",
        "markdown": "...",
        "searchQueries": []
      }
    }

cloro extracts Grok's X-grounded answers with full source metadata. The same key also gets you ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, and Copilot.

Grok is the only AI search engine wired into a real-time content stream (X). That makes it the most volatile surface to monitor and the one richest in trending-brand signal. The xAI API ships with rate-limit caps that make sustained brand monitoring impractical.

X runs some of the most aggressive anti-automation on the public web, and Grok inherits that defense. DIY pipelines measure uptime in hours rather than days, and burn through six-figure proxy budgets to maintain sub-50% success rates. cloro absorbs the access fight so sustained Grok monitoring is actually possible.

Unlike ChatGPT or Gemini, whose grounding indexes refresh in days or weeks, Grok cites X posts published in the last 30 minutes. The same prompt run twice an hour apart returns substantially different citation sets. A daily snapshot misses nearly everything. Hourly sampling is what catches the trending content layer that drives Grok's brand-visibility signal.
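One way to quantify how much the citation set shifts between two runs is a Jaccard overlap on the cited URLs. The sketch below assumes responses shaped like the sample JSON on this page (`result.sources[].url`); the two runs and the helper names are hypothetical and for illustration only.

```python
def citation_urls(response: dict) -> set[str]:
    """Collect cited URLs from a response shaped like the sample JSON on this page."""
    sources = response.get("result", {}).get("sources", [])
    return {s["url"] for s in sources if "url" in s}


def citation_overlap(earlier: dict, later: dict) -> float:
    """Jaccard overlap of two runs: 1.0 means identical citations, 0.0 means none shared."""
    a, b = citation_urls(earlier), citation_urls(later)
    if not a and not b:
        return 1.0  # two empty citation lists count as identical
    return len(a & b) / len(a | b)


# Hypothetical runs of the same prompt, one hour apart (made-up URLs).
run_10am = {"result": {"sources": [{"url": "https://example.com/a"},
                                   {"url": "https://example.com/b"}]}}
run_11am = {"result": {"sources": [{"url": "https://example.com/b"},
                                   {"url": "https://example.com/c"}]}}

print(round(citation_overlap(run_10am, run_11am), 2))  # 0.33
```

Tracking this number per prompt over time gives a rough volatility signal: a consistently low overlap suggests the prompt needs the tighter sampling cadence.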

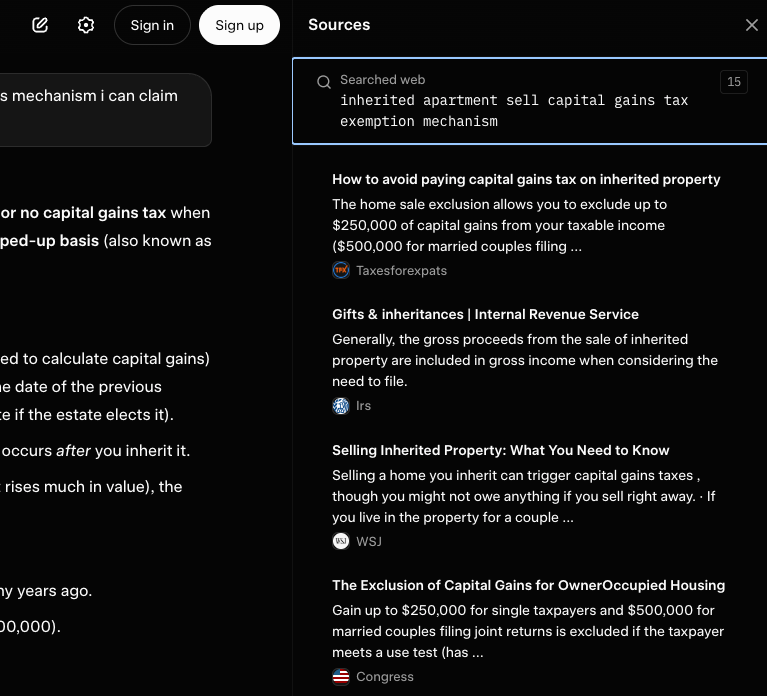

The xAI API is invitation-gated, rate-capped, and returns model output without the rich source metadata. Surfer's analysis measured ~20% overlap between API responses and the rendered UI across LLMs.

Grok citations carry fields no other AI engine exposes. These are trust signals your dashboards should use to evaluate citation quality. None of it lives in any API endpoint. cloro returns all of it as structured JSON.

Parse markdown, rich source metadata (creator, preview, image, favicon), and citations from one endpoint.

import requests

response = requests.post(

"https://api.cloro.dev/v1/monitor/grok",

headers={

"Authorization": "Bearer sk_live_your_api_key_here",

"Content-Type": "application/json"

},

json={

"prompt": "What are the latest developments in quantum computing 2026?",

"country": "US",

"include": {

"markdown": True

}

}

)

print(response.json()){

"success": true,

"result": {

"text": "Recent developments in quantum computing include breakthrough error correction methods, increased qubit stability, and practical applications in cryptography...",

"model": "grok-4-auto",

"sources": [

{

"position": 1,

"url": "https://example.com/quantum-breakthrough",

"label": "MIT Technology Review",

"description": "Scientists achieve 99.9% qubit fidelity in room temperature conditions..."

}

],

"html": "...",

"markdown": "**Recent developments in quantum computing** include breakthrough error correction methods...",

"searchQueries": [

"quantum computing breakthroughs 2026",

"qubit fidelity room temperature"

]

}

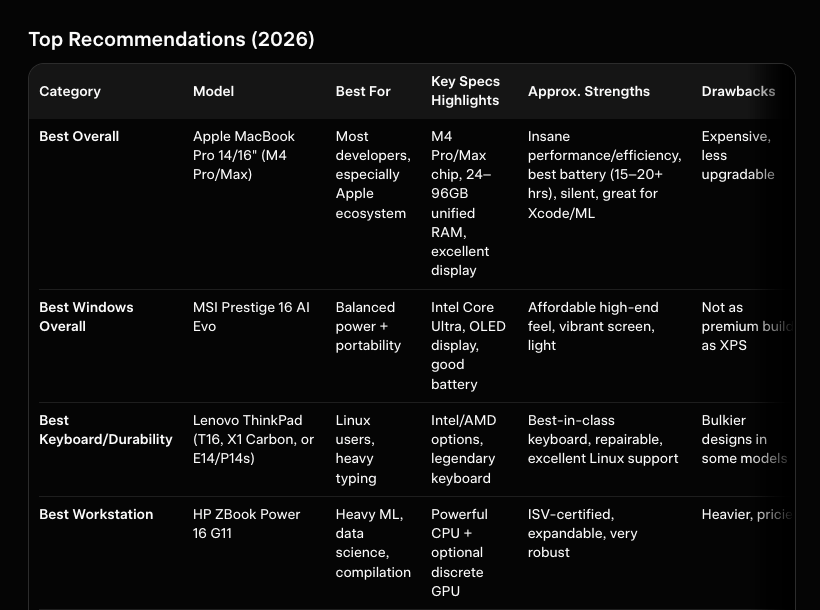

} Pick a plan that fits your volume. Price per credit drops as you scale.

Credit cost per request varies by provider. The rates below apply to async/batch requests; sync requests add a 2-credit surcharge.

Google News uses the same pricing as Google Search.

There are two reasons to use cloro rather than the xAI API directly. First, xAI access is gated, so you may not get the rate limits brand monitoring requires. Second, the API returns model output without the rich source metadata (creator, preview, image, favicon) that Grok's UI surfaces. cloro extracts the full rendered surface and abstracts the access layer.

Grok cites X posts as recent as the last 30 minutes. For brand monitoring, hourly or every-2-hour sampling is the floor. Daily is too slow for trending content. cloro doesn't charge differently for higher cadence: same cost per request whether you query monthly or hourly.

Each citation includes URL, label, description, plus Grok-specific fields. No other AI search engine exposes this level of metadata. cloro returns all of it as structured JSON.
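As a minimal sketch of turning that JSON into rows for a dashboard, the snippet below reads the four citation fields that appear in the sample response on this page (`position`, `url`, `label`, `description`). The Grok-specific fields are left out because their JSON key names are not shown here; the `Citation` class and `parse_citations` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    position: int
    url: str
    label: str
    description: str


def parse_citations(response: dict) -> list[Citation]:
    """Flatten result.sources into Citation rows, ordered by position."""
    if not response.get("success"):
        return []  # treat a failed request as "no citations"
    rows = [
        Citation(
            position=src.get("position", 0),
            url=src.get("url", ""),
            label=src.get("label", ""),
            description=src.get("description", ""),
        )
        for src in response.get("result", {}).get("sources", [])
    ]
    return sorted(rows, key=lambda c: c.position)


# Trimmed copy of the sample response shown on this page.
sample = {
    "success": True,
    "result": {
        "sources": [
            {
                "position": 1,
                "url": "https://example.com/quantum-breakthrough",
                "label": "MIT Technology Review",
                "description": "Scientists achieve 99.9% qubit fidelity...",
            }
        ]
    },
}

for c in parse_citations(sample):
    print(c.position, c.label, c.url)
```

Using `.get` with defaults keeps the parser tolerant of citations that omit a field, which seems prudent when the upstream surface changes often.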

Every Grok request cloro runs hits the live grok.x.com surface, with no intermediate cache and no stale snapshot. Typical end-to-end latency is 30-60 seconds. If you query Grok at 10:15 about something that broke at 10:10, the citations you get are the citations a real Grok user would get at 10:15. That's the only useful behavior for trend monitoring.

Grok's answers vary by country less than Gemini's or Copilot's, because X is a single global graph rather than a federated index. The *trending* content layer is heavily region-skewed, though: what's hot on X in JP at 9 AM is invisible to a US-only sample. Pass `country` per request to capture the regional trending mix that drives a meaningful chunk of Grok's citation list.
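A rough sketch of fanning one prompt list out across regions: it builds one request body per (prompt, country) pair, reusing the body shape from the examples on this page. The helper name is hypothetical, and the country values assume two-letter codes like the `"US"` used above; nothing is sent over the network here.

```python
from itertools import product


def build_requests(prompts: list[str], countries: list[str]) -> list[dict]:
    """Return one request body per (prompt, country) combination."""
    return [
        {"prompt": prompt, "country": country, "include": {"markdown": True}}
        for prompt, country in product(prompts, countries)
    ]


# Hypothetical tracked prompts and regions.
bodies = build_requests(
    ["best running shoes", "best trail shoes"],
    ["US", "JP"],
)

print(len(bodies))  # 4
print(bodies[0]["country"], bodies[1]["country"])  # US JP
```

Each body can then be posted to the Grok endpoint with the same headers as the earlier example.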

For brand or topic monitoring, hourly is the floor and 15-minute is the ceiling. Anything slower misses trending content. Anything faster wastes budget on duplicates. Hourly × every tracked prompt × 24 hours adds up, so fire the batch via `POST /v1/monitor/grok/async` and ingest webhooks. Sync polling at this cadence is impractical.
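To see how quickly the volume adds up, here is a back-of-the-envelope calculation. The prompt and country counts are made-up inputs, and the function name is hypothetical; only the arithmetic (prompts × countries × samples per day) comes from the paragraph above.

```python
def requests_per_day(prompts: int, countries: int, interval_minutes: int) -> int:
    """Daily request count for a fixed sampling interval."""
    samples_per_day = (24 * 60) // interval_minutes
    return prompts * countries * samples_per_day


# Hypothetical workload: 50 tracked prompts sampled in 2 countries.
print(requests_per_day(50, 2, 60))  # hourly: 2400
print(requests_per_day(50, 2, 15))  # every 15 minutes: 9600
```

Moving from hourly to 15-minute sampling quadruples the request count, which is why the batch endpoint is the practical choice at this cadence.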

X runs some of the most aggressive anti-automation on the public web. DIY pipelines measure their uptime in hours rather than days. We've watched in-house Grok scrapers burn through six-figure proxy budgets just to maintain sub-50% success rates. cloro absorbs that fight. The Hobby plan ($100/month) covers the workload at a steady success rate that DIY teams can't reach at any price.

